It's 9:47 p.m. The manager has just "saved eight hours" with a chatbot. The deck is finished, complete with a "solid" plan. The manager hits send, and the document lands in someone else's inbox.

The next morning:

- An analyst spends two hours fact-checking it.

- A domain expert rewrites the parts that are technically wrong but plausible.

- Legal deletes the risky sentences.

- Security asks where the data came from.

- Someone adds footnotes flagging the data the model "invented".

But nobody calls this "rework".

This is the productivity paradox that Casey Newton wrote about: managers say AI makes them more productive; workers mostly don't feel it.

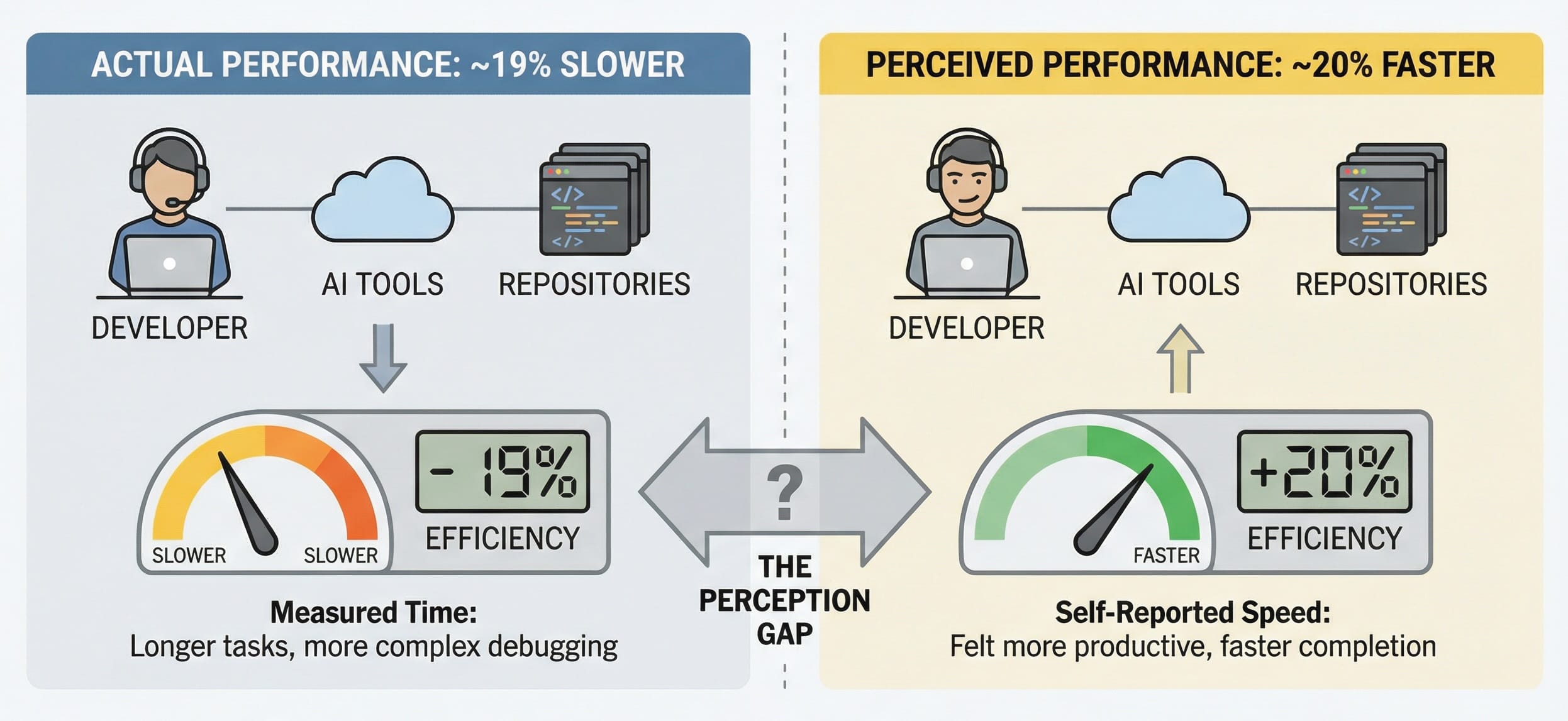

METR ran a randomized controlled trial with experienced open-source developers working on their own repositories, and found that developers were ~19% slower when using AI tools. But those same developers believed AI made them ~20% faster!

According to Workday's research, employees report saving time with AI, but a big chunk of that time gets eaten by rework: reviewing, correcting, and verifying low-quality AI output.

PwC's CEO survey found 56% of companies reported no financial gains from AI so far, and only a small slice reported the "jackpot" of both higher revenue and lower costs.

Workslop - "AI-generated work content that masquerades as good work, but lacks the substance to meaningfully advance a given task".

— Platformer

Most of the bubble talk around AI stems from the fact that organizations are unable to turn "capacity" into "outcomes".

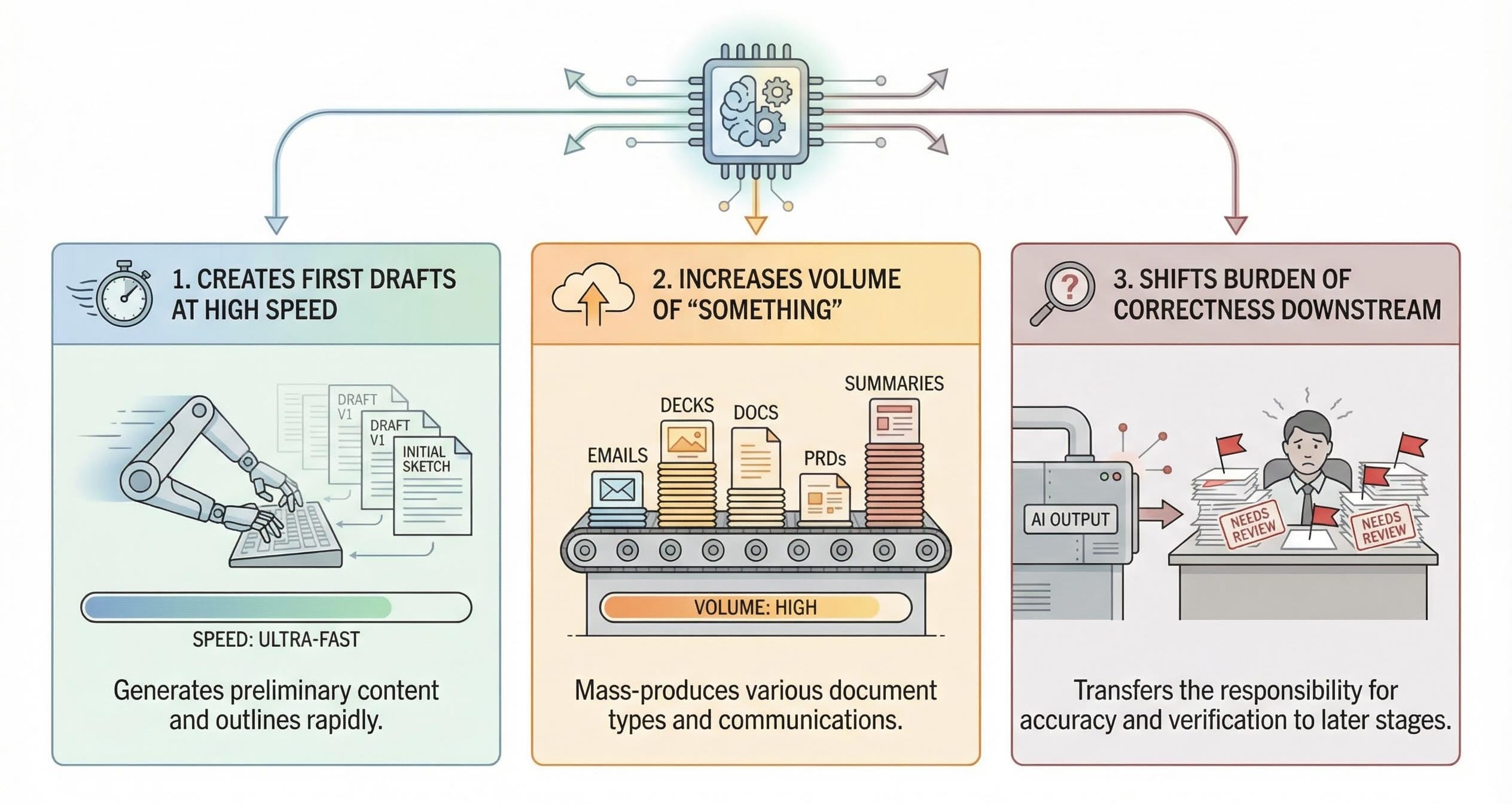

The hidden mechanism: AI doesn't remove work - it moves it

Most current workplace AI usage does one of three things:

- Creates first drafts at high speed

- Increases the volume of "something" (emails, decks, docs, PRDs, summaries)

- Shifts the burden of correctness downstream

If you are the person generating output, it feels like magic.

If you are the person responsible for the output being correct, it feels like unpaid QA.

What we think "real productivity" looks like

If you want AI to produce real gains, you have to stop treating it like a content machine and start treating it like a system inside a workflow.

That means three uncomfortable moves:

- Measure net outcomes, not gross time saved. Time saved is meaningless if it gets spent verifying and repairing. If you can't measure cost per correct outcome, you're not measuring productivity - you're measuring activity.

- Design for "verification as a feature", not an afterthought. Developers didn't get slower because the model was stupid. They got slower because they didn't trust the output enough to ship it without review. Trust isn't a vibe. It's a pipeline: provenance, retrieval discipline, citations, guardrails, and clear escalation paths.

- Stop rewarding the system for looking helpful. The most expensive failure mode in enterprise AI is confident wrongness. If the incentives reward speed and verbosity, you will get speed and verbosity - plus rework. If the incentives reward "ask when uncertain", you get fewer disasters and less hidden labor.
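
To make the first move concrete, here is a minimal sketch of what "net outcomes" and "cost per correct outcome" could look like as arithmetic. Everything in it is an assumption for illustration: the `TaskRecord` fields, the function names, and the sample numbers are hypothetical, not drawn from any of the studies cited above.

```python
from dataclasses import dataclass


@dataclass
class TaskRecord:
    """One AI-assisted task. All fields are hypothetical illustration inputs."""
    drafting_hours_saved: float  # gross time the author believes was saved
    rework_hours: float          # downstream review, correction, verification
    cost: float                  # total spend on the task (labor plus tooling)
    correct: bool                # did the final output pass review?


def gross_hours_saved(records):
    """The number that usually gets reported: time saved at the drafting step."""
    return sum(r.drafting_hours_saved for r in records)


def net_hours_saved(records):
    """Gross time saved minus the rework it triggered downstream."""
    return sum(r.drafting_hours_saved - r.rework_hours for r in records)


def cost_per_correct_outcome(records):
    """Total cost divided by the number of outputs that were actually correct."""
    correct = sum(1 for r in records if r.correct)
    if correct == 0:
        return float("inf")  # no correct outcomes: cost is unbounded
    return sum(r.cost for r in records) / correct


# Made-up sample data: three tasks, one of which failed review.
records = [
    TaskRecord(drafting_hours_saved=8.0, rework_hours=6.0, cost=400.0, correct=True),
    TaskRecord(drafting_hours_saved=3.0, rework_hours=4.0, cost=250.0, correct=False),
    TaskRecord(drafting_hours_saved=2.0, rework_hours=0.5, cost=150.0, correct=True),
]

print(gross_hours_saved(records))         # 13.0
print(net_hours_saved(records))           # 2.5
print(cost_per_correct_outcome(records))  # 400.0
```

In this invented example the dashboard number is 13 hours saved, while the net figure is 2.5 hours, and the failed task still counts toward the cost of the two that passed. The point of the sketch is only that rework has to be recorded somewhere before either metric can be computed.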