I was doom-scrolling for something to play in the background - one of those nights where you want noise, not insight - and I clicked The Joe Rogan Experience #2440: "Matt Damon & Ben Affleck".

About thirty minutes in, Ben started sounding less like an actor and more like an ops lead who's seen a generative AI system fail in production.

He called AI writing "shitty" because it "goes to the mean". He argued the tech is "leveling off" and went on to say that the hype is driven by economics - valuations that need to justify data-center capex.

It was a surprisingly accurate description of the three constraints that actually govern AI in the real world - the world Riafy lives in.

Here's my thesis: Affleck's "AI reality check" distills into three curves -

- Output quality

- Inference cost

- Deployment friction

The industry keeps arguing about quality, while cost and deployment quietly decide what ships.

The future of AI will be shaped by systems that stay reliable, stay affordable, and survive integration.

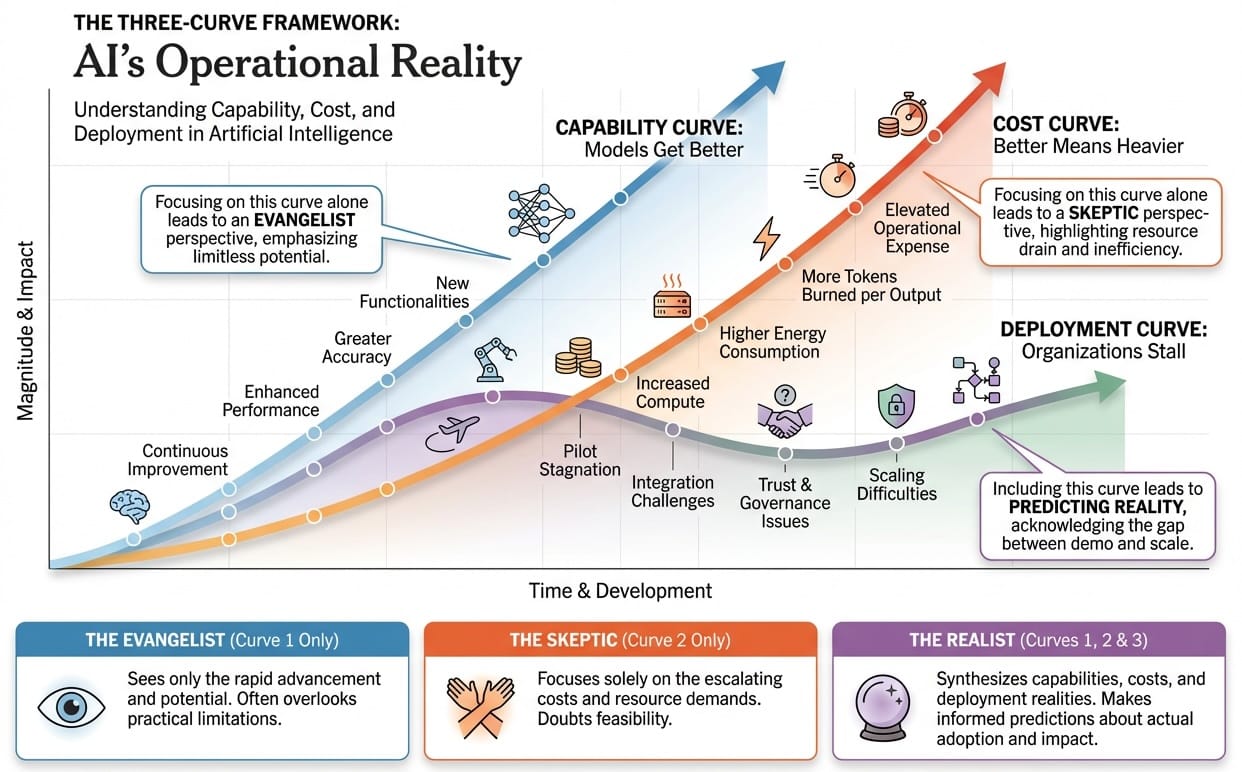

The Three-Curve Test

Every AI conversation I've been in over the last few years follows the same arc. Someone demos something impressive. Everyone nods. Then six months later, it's still not in production - and no one can quite explain why.

The gap isn't intelligence. It's economics.

Here's the framework that makes it visible:

- Quality curve: Models are getting better, sometimes drastically.

- Cost curve: "Better" models come with more tokens, more compute, more energy, and a higher unit cost per task.

- Deployment curve: Organizations can't turn pilots into production because trust, governance, and workflow fit don't scale like demos do.

Simply put,

Quality goes up. Cost goes up faster. Deployment lags.

If someone's AI roadmap doesn't mention all three curves, it's not a roadmap. It's a pitch deck looking for the next funding round.

Let's take a closer look at Ben Affleck's claims

Claim 1: AI is trained to be statistically average - and by definition can't make anything exceptional

In operational terms, language models are trained on billions of examples and optimized to predict what comes next based on patterns in that data. They learn to produce statistically likely continuations - outputs that sit near the center of the distribution.

That's the mean.

And here's the problem:

Exceptional output is exceptional because it's rare!

It sits at the edges of the distribution, not the center. It makes unusual choices. It breaks patterns instead of following them.

Affleck isn't wrong about this. He's describing the fundamental constraint of systems that learn from averages: they reproduce averages. Sometimes very competently. But averages nonetheless.
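To make "goes to the mean" concrete, here is a toy sketch. The word list and probabilities are invented for illustration, and no production model is configured this simply. It only shows that always picking the most likely continuation never surfaces the rare option, and that sampling surfaces it only about as often as its probability allows.

```python
import random

# Hypothetical next-phrase probabilities after "The night was ..."
# (made-up numbers, not taken from any real model).
next_word_probs = {
    "dark": 0.55,
    "cold": 0.25,
    "quiet": 0.15,
    "a bruise that would not heal": 0.05,
}

def greedy(probs):
    """Always pick the single most likely continuation."""
    return max(probs, key=probs.get)

def sample(probs, rng):
    """Draw one continuation in proportion to its probability."""
    words = list(probs)
    weights = [probs[w] for w in words]
    return rng.choices(words, weights=weights, k=1)[0]

rng = random.Random(0)  # fixed seed so the run is repeatable
draws = [sample(next_word_probs, rng) for _ in range(10_000)]
rare_share = draws.count("a bruise that would not heal") / len(draws)

print("greedy pick:", greedy(next_word_probs))
print(f"share of rare continuation when sampling: {rare_share:.3f}")
```

The greedy pick is always "dark". The unusual line shows up in roughly one draw out of twenty, which is the center of the distribution winning by construction.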

That is why fine-tuning these models for your specific use cases matters so much.

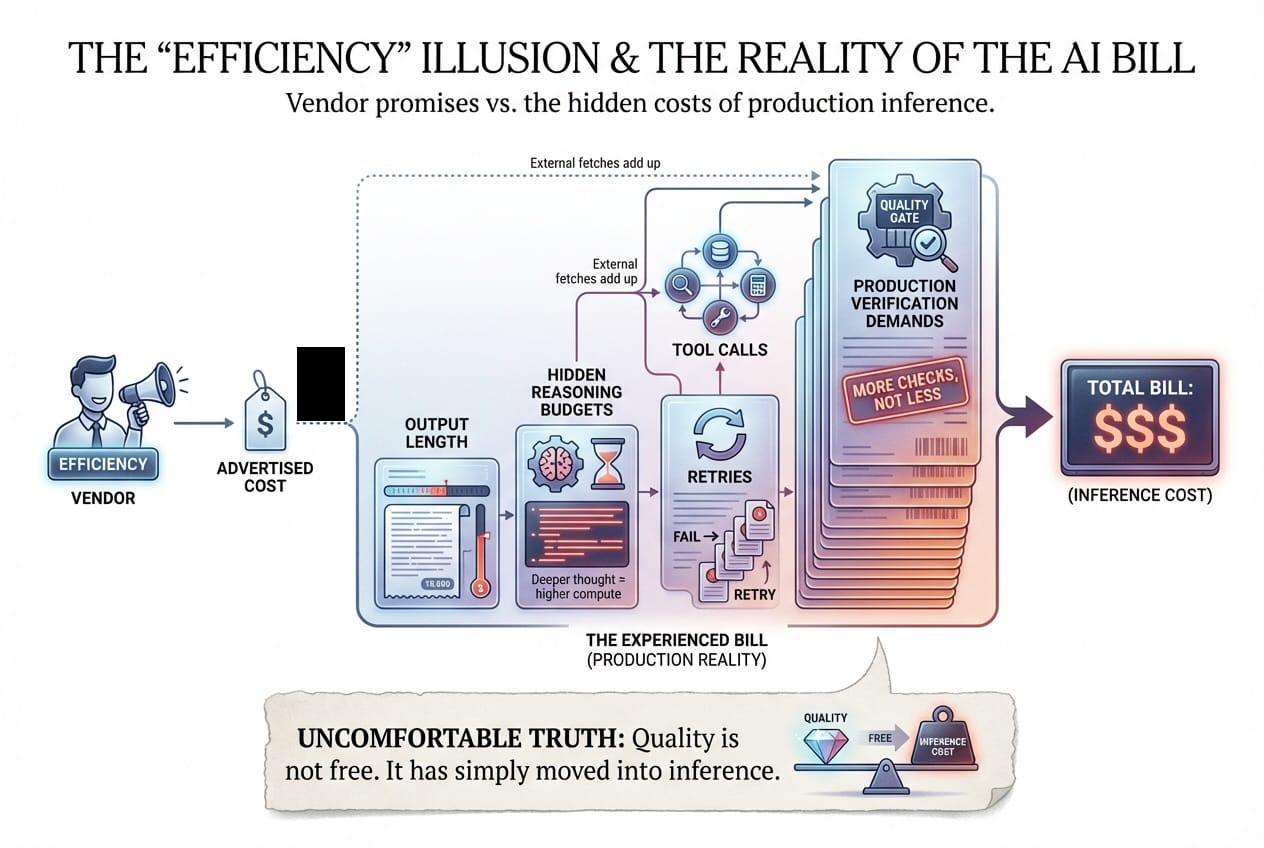

Claim 2: Newer is more expensive

Affleck threw out a rough claim about next-gen models costing multiples more in electricity. The exact multiplier matters less than the direction - and the direction is brutal.

When OpenAI released GPT-5, they framed it as a capability leap: 94.6% on AIME 2025, 74.9% on SWE-bench Verified.

That's the quality curve doing what it does.

But the cost curve climbed with it. A University of Rhode Island AI Lab estimate pegged GPT-5 inference at roughly 18.35 Wh average for a ~1,000-token response - with some responses hitting 40 Wh. Compare that to GPT-4's 2.12 Wh.

On those averages, that's roughly 8.7× higher. At the 40 Wh peak, it's closer to 19×.
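The ratio can be worked directly from the figures quoted above. This is a quick sketch: the three Wh values are the estimates cited in this article, while `daily_kwh` and the one-million-responses-per-day volume are hypothetical, added only to show how a per-response number turns into a daily one.

```python
# Estimates quoted in the article (Wh per ~1,000-token response).
GPT5_AVG_WH = 18.35
GPT5_PEAK_WH = 40.0
GPT4_AVG_WH = 2.12

avg_ratio = GPT5_AVG_WH / GPT4_AVG_WH    # average vs. average
peak_ratio = GPT5_PEAK_WH / GPT4_AVG_WH  # worst case vs. average

def daily_kwh(wh_per_response, responses_per_day):
    """Convert per-response energy into kWh per day."""
    return wh_per_response * responses_per_day / 1000

# Hypothetical volume, chosen only for illustration.
RESPONSES_PER_DAY = 1_000_000

print(f"average ratio: {avg_ratio:.2f}x")
print(f"peak ratio:    {peak_ratio:.2f}x")
print(f"daily energy at GPT-5 average: "
      f"{daily_kwh(GPT5_AVG_WH, RESPONSES_PER_DAY):,.0f} kWh")
```

The average-to-average ratio comes out at about 8.66, and the peak case at nearly 19 times the GPT-4 figure.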

Even though vendors advertise efficiency, teams experience the bill as a function of:

- output length

- hidden reasoning budgets

- retries

- tool calls

- and the uncomfortable truth that production systems demand more verification, not less.
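The list above can be sketched as a simple cost model. Everything here is an assumption for illustration: the prices are made up, `RequestProfile` and `Prices` are hypothetical names, reasoning tokens are assumed to be billed like output tokens, and each verification pass is assumed to cost the same as the primary call.

```python
from dataclasses import dataclass

@dataclass
class Prices:
    per_1k_input: float   # hypothetical price per 1,000 input tokens
    per_1k_output: float  # hypothetical price per 1,000 output tokens
    per_tool_call: float  # hypothetical flat price per tool call

@dataclass
class RequestProfile:
    input_tokens: int
    output_tokens: int
    reasoning_tokens: int    # hidden "thinking" tokens, assumed billed as output
    tool_calls: int
    retry_rate: float        # expected extra attempts per request (0.1 = 10%)
    verification_calls: int  # extra model passes used to check the answer

def cost_per_request(p: RequestProfile, prices: Prices) -> float:
    """Expected spend for one user-visible answer."""
    single_call = (
        p.input_tokens / 1000 * prices.per_1k_input
        + (p.output_tokens + p.reasoning_tokens) / 1000 * prices.per_1k_output
        + p.tool_calls * prices.per_tool_call
    )
    # Assumption: every verification pass costs as much as the primary call,
    # and retries repeat the whole bundle.
    calls = (1 + p.verification_calls) * (1 + p.retry_rate)
    return single_call * calls

prices = Prices(per_1k_input=0.001, per_1k_output=0.01, per_tool_call=0.002)

demo = RequestProfile(1000, 500, 0, 0, 0.0, 0)
production = RequestProfile(1000, 500, 2000, 2, 0.1, 1)

demo_cost = cost_per_request(demo, prices)
prod_cost = cost_per_request(production, prices)
print(f"demo:       ${demo_cost:.4f}")
print(f"production: ${prod_cost:.4f}")
print(f"multiplier: {prod_cost / demo_cost:.1f}x")
```

With these invented numbers, the visible answer is identical in both profiles, yet the production path costs about eleven times more per request. None of that difference appears on a per-token price sheet.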

Quality is not free. It has simply moved into inference.

Claim 3: Adoption will be slow because integration is a bottleneck

Affleck's slow-adoption instinct sounds like skepticism until you see what actually happens when AI meets enterprise reality.

IDC research with Lenovo, summarized by CIO, found that 88% of AI POCs don't make it to wide-scale deployment. For every 33 pilots launched, only 4 graduate to production.

S&P Global Market Intelligence sharpened the edge: the proportion of companies abandoning most AI initiatives jumped from 17% a year earlier to 42% in 2025. The average organization scrapped 46% of POCs before they shipped.

Most people believe that once the models get "good enough", adoption will accelerate on its own momentum.

But the reality is that "good enough" doesn't ship by itself.

It collides with data permissions, security reviews, audit requirements, workflow ownership, and the hard fact that humans don't trust systems that can't explain their decisions.

Claim 4: AI is leveling off

Affleck's "plateauing" claim aligns with what several top researchers have been saying publicly: the current pre-training approach is running into limits.

Because "pretraining with more data" is yielding diminishing returns, AI labs are pivoting towards test-time compute, i.e., more reasoning during inference.

This pivot couples all three curves more tightly:

- If progress moves into inference-time reasoning,

- then quality gains increasingly ride on cost,

- which makes deployment harder to justify.

This is the real pattern Affleck is pointing at, whether he knows it or not:

We are adding capability faster than we are building the operational discipline to afford it, trust it, and integrate it.

What this means for anyone building real systems

If we want AI that survives customers, regulators, and budgets, we build like all three curves are real.

Here's what actually ships:

- Measure cost per correct outcome, not cost per token. Tokens are an input. Outcomes are business value.

- Treat reasoning budgets as a first-class resource. "Thinking tokens" aren't magic. They're metered.

- Instrument retries and verification as part of the product. In production, "confidence" is a liability unless you can trace it backward.

- Promote models based on deployment metrics, not benchmark scores. If 88% of pilots die before production, optimizing for benchmarks is a terrible AI strategy.
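The first and last points in the list above can be sketched together. This is a minimal example under stated assumptions: `ModelRun`, `cost_per_correct`, and `pick` are hypothetical names, the two runs are invented numbers, and "correct" means whatever your own evaluation counts as a usable outcome.

```python
from dataclasses import dataclass

@dataclass
class ModelRun:
    name: str
    total_cost: float  # all spend for the run, including retries and checks
    tasks: int         # tasks attempted
    correct: int       # tasks that produced a usable outcome

def cost_per_correct(run: ModelRun) -> float:
    """Spend divided by usable outcomes, not by tokens or requests."""
    if run.correct == 0:
        return float("inf")
    return run.total_cost / run.correct

def pick(runs, min_accuracy):
    """Cheapest model per correct outcome among those meeting the quality floor."""
    eligible = [r for r in runs if r.correct / r.tasks >= min_accuracy]
    if not eligible:
        return None
    return min(eligible, key=cost_per_correct)

# Invented evaluation results for illustration only.
runs = [
    ModelRun("small-model", total_cost=20.0, tasks=1000, correct=800),
    ModelRun("large-model", total_cost=90.0, tasks=1000, correct=900),
]

for r in runs:
    print(f"{r.name}: ${cost_per_correct(r):.3f} per correct outcome")

print("floor 0.75 ->", pick(runs, 0.75).name)
print("floor 0.85 ->", pick(runs, 0.85).name)
```

The quality floor is the design choice that matters here. Below it, the cheaper model wins on cost per correct outcome. Above it, the larger model is the only one that qualifies, and the meter becomes the thing to manage.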

Long story short,

The future of AI won't be decided by whoever demos the smartest model.

It'll be decided by whoever can keep the meter predictable, the errors traceable, and the integration survivable - while still shipping outcomes that hold up at full production capacity.

Affleck gave us the rough shape. Now the question is whether we build like it's real.